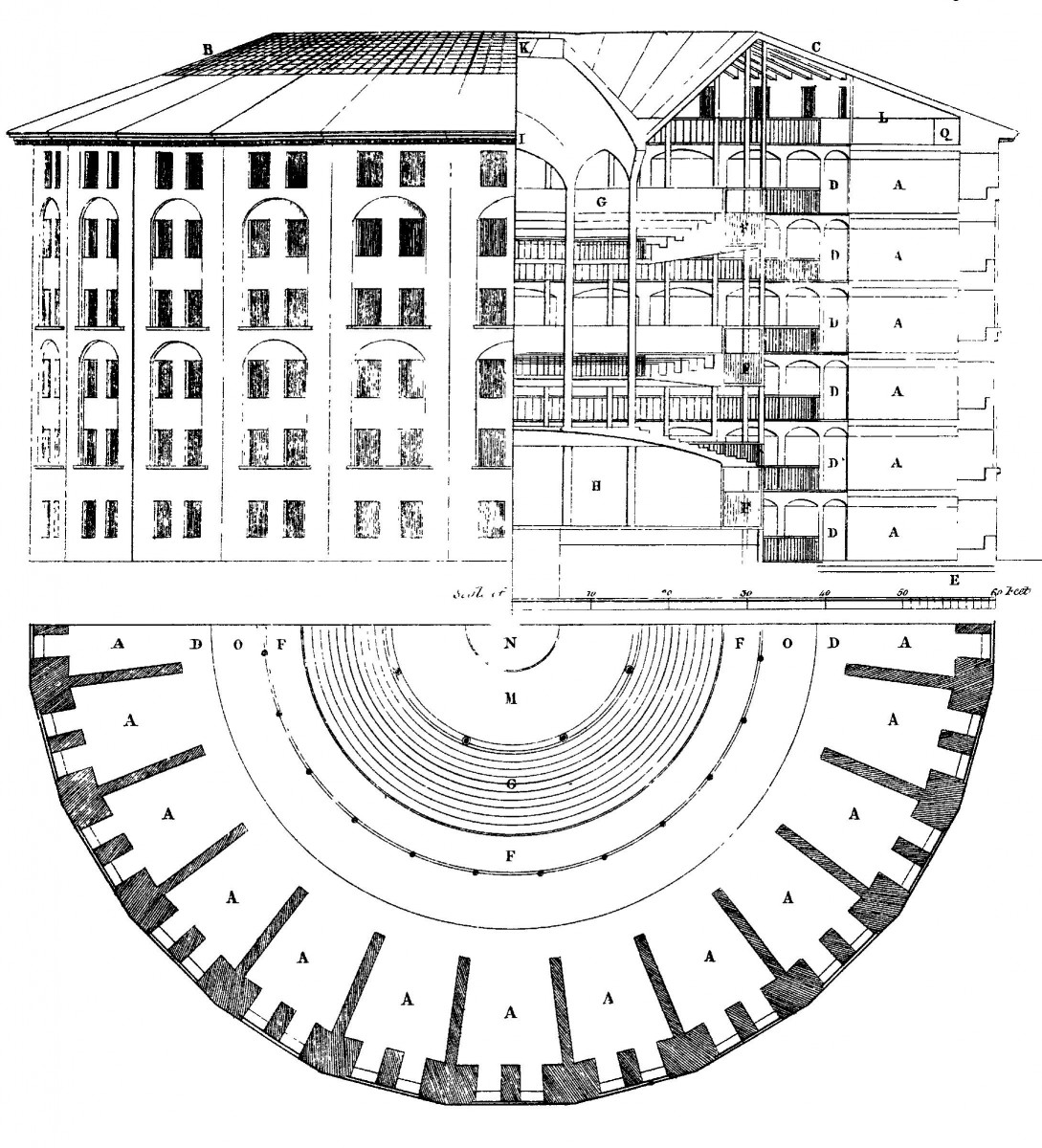

Image source: Jeremy Bentham [Public domain], via Wikimedia Commons

If, however, we did choose to keep this data, it would allow us to do some useful things. We would, for example, be better able to determine who may have damaged an item if we failed to notice a problem when checking it in. With some additional programming, we might be able to build a recommendation system on top of this data that would suggest other materials to our readers based on the habits of similar patrons. We might conceivably also be able to gather aggregate usage statistics to see how our collections are used by discipline, by class year, or even, perhaps, by GPA, and to explore whether there is any relationship between academic achievement and use of our resources. These potential uses are all very enticing, and might make our currently rather pedestrian catalog a bit more exciting and interactive.

We won’t be attempting any of that.

I write this a few weeks after Mark Zuckerberg, Facebook’s CEO, was summoned to Washington for two days of testimony in the wake of the disclosure that Cambridge Analytica had obtained personal data, harvested through Facebook’s API, on tens of millions of Facebook users, most of them American, as a means to influence the 2016 presidential election. As we’ve known for decades, when a service is free to a consumer, the consumer is not really the customer but the product; the actual customer is the advertiser (or political operative) paying for access to the personal data of the service’s users. Increasingly, the systems we buy come configured to encourage us to collect massive amounts of data about our community. Computers are really, really good at doing this sort of thing. In turn, we also need to get really, really good at thinking about our values, our core principles, and the ramifications of the seemingly small choices we make as we procure and configure these systems. And we need to be clear with our community about what data we are collecting, how we are protecting it, who has access to it, and how it is being used.

In our new strategic framework, we’ve identified critical digital fluency as one of the core elements of a Middlebury education. We’ve begun a series of workshops and conversations that will help us as a community develop a shared vocabulary about what critical digital fluency means, and what it looks like in terms of both technical skills and habits of mind. This is a timely development. We live and work in a world that is increasingly mediated by technology, and we make choices, both large and small, that collectively shape every dimension of our personal and professional lives. Creating community norms around privacy and data, and learning to think critically about which technologies we choose and how we configure them, must be a core part of our collective development of this newly defined fluency. In addition to being thoughtful, critical consumers of technology, we can also, through collective action, identify opportunities to positively influence the future direction of the technologies we increasingly rely upon, to reclaim the scholarly commons, to resist and reject models in which we are the product, and to help build new infrastructures that support our values.