By Christopher Andrews

Assistant Professor, Computer Science

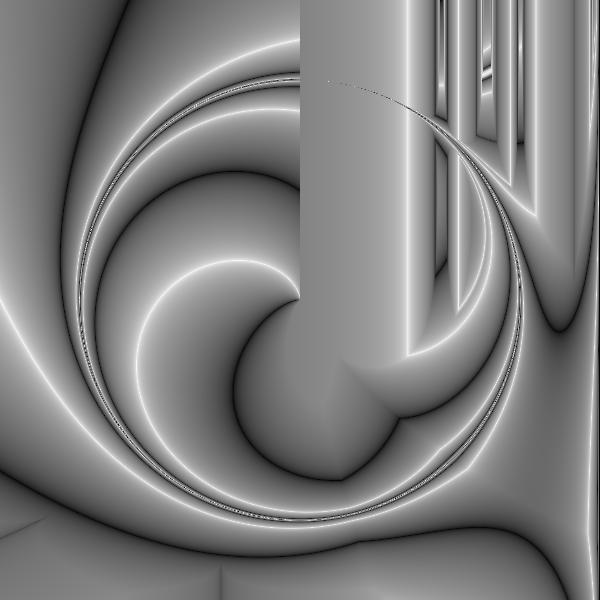

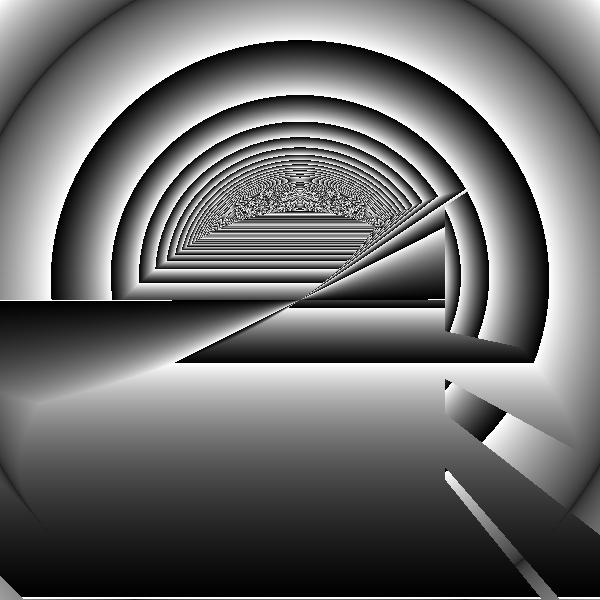

Over the past year and a half or so, I have been developing an evolutionary art tool based on expression trees. The idea itself is fairly old and can be traced back to work done by Karl Sims in the early nineties. The premise is that, given an arbitrary equation in two variables, X and Y, we can generate an image. Mechanically, we treat the image as lying on the X,Y plane, so that each pixel of the image has a unique (X,Y) coordinate. To draw the image, we visit each pixel and evaluate the equation using that pixel’s values for X and Y.
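The per-pixel evaluation described above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the tool’s actual code: the tree encoding (a node is `"x"`, `"y"`, a float, or a tuple `(function_name, *children)`), the small `FUNCTIONS` table, the names `evaluate` and `render`, and the choice to map the image onto the square [-1, 1] × [-1, 1] with grayscale output are all hypothetical.

```python
import math

# Hypothetical tree encoding (an assumption for this sketch): a node is
# "x", "y", a float constant, or a tuple (function_name, *children).
FUNCTIONS = {
    "add": lambda a, b: a + b,
    "mul": lambda a, b: a * b,
    "sin": math.sin,
    "cos": math.cos,
}


def evaluate(node, x, y):
    """Recursively evaluate an expression tree at the point (x, y)."""
    if node == "x":
        return x
    if node == "y":
        return y
    if isinstance(node, (int, float)):
        return float(node)
    name, *children = node
    return FUNCTIONS[name](*(evaluate(child, x, y) for child in children))


def render(expr, width, height):
    """Evaluate expr once per pixel and return rows of grayscale values (0..255).

    The image is mapped onto the square [-1, 1] x [-1, 1]; width and height
    must be at least 2. Results outside [-1, 1] are clamped before scaling.
    """
    image = []
    for row in range(height):
        y = 1 - 2 * row / (height - 1)  # top row is y = 1, bottom row is y = -1
        pixels = []
        for col in range(width):
            x = -1 + 2 * col / (width - 1)  # left column is x = -1
            value = max(-1.0, min(1.0, evaluate(expr, x, y)))
            pixels.append(round((value + 1) * 127.5))
        image.append(pixels)
    return image
```

For example, `render(("sin", ("mul", 3.0, ("add", "x", "y"))), 64, 64)` draws diagonal stripes of sin(3(X + Y)). Color output (for instance, one tree per RGB channel) is a natural extension omitted here.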

This process becomes more interesting when we introduce evolution. The computer generates a collection of random equations. The user then selects the most interesting images, and these become the progenitors of a new generation. The images can “mate,” swapping portions of their underlying equations, or they can be mutated, with the underlying equation altered by swapping variables, changing functions, or even introducing complex transformations such as mirroring. The user is presented with the new generation, and the cycle starts anew.
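The “mating” and mutation operators can likewise be sketched as operations on expression trees. This sketch assumes a hypothetical tuple encoding for trees (a node is `"x"`, `"y"`, a float, or `(function_name, *children)`); the helper names `paths`, `get`, `replace`, `crossover`, and `mutate`, and the `ARITY` table, are illustrative assumptions rather than the tool’s API. Crossover here is the common genetic-programming scheme of grafting a random subtree of one parent onto a random position in the other; mutation covers only variable swaps, function changes, and constant nudges, not transformations such as mirroring.

```python
import random

# Hypothetical function set, mapping each function name to its arity.
ARITY = {"add": 2, "mul": 2, "sin": 1, "cos": 1}


def paths(node, path=()):
    """Yield the path (a tuple of child indices) to every node in the tree."""
    yield path
    if isinstance(node, tuple):
        # Index 0 holds the function name, so children start at index 1.
        for i, child in enumerate(node[1:], start=1):
            yield from paths(child, path + (i,))


def get(node, path):
    """Return the subtree found by following path from node."""
    for i in path:
        node = node[i]
    return node


def replace(node, path, new):
    """Return a copy of node with the subtree at path replaced by new."""
    if not path:
        return new
    i = path[0]
    return node[:i] + (replace(node[i], path[1:], new),) + node[i + 1:]


def crossover(a, b, rng):
    """'Mate' two parents: graft a random subtree of b onto a random spot in a."""
    donor = get(b, rng.choice(list(paths(b))))
    return replace(a, rng.choice(list(paths(a))), donor)


def mutate(node, rng):
    """Apply one random point mutation to a copy of node."""
    path = rng.choice(list(paths(node)))
    target = get(node, path)
    if target in ("x", "y"):
        new = "y" if target == "x" else "x"  # swap variables
    elif isinstance(target, tuple):
        # Change the function to another of the same arity, keeping its children.
        options = [f for f, n in ARITY.items() if n == len(target) - 1 and f != target[0]]
        new = (rng.choice(options),) + target[1:] if options else target
    else:
        new = target + rng.uniform(-0.5, 0.5)  # nudge a constant
    return replace(node, path, new)
```

Because `replace` rebuilds tuples instead of modifying them, the parents are left intact, so a selected image can be bred several times within one generation.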

Some of the images produced by this process are dross: blank, or a confused mass of random noise. Others, however, are quite compelling. Patterns and shapes emerge, arrangements that can seem familiar yet alien. Something draws me to keep iterating, chasing the next surprise.

And yet, there is something vaguely unsatisfying about the process as well. In principle, there is an equation that could produce any arrangement of pixels, from a blank slate to the Mona Lisa. The genetic algorithm that drives the process is essentially a search algorithm exploring the space of all possible images. Leaving aside the (not unimportant) question of whether or not these images are art, we have to ask: who is the artist? Karl Sims saw the user as the artist, likening the act of driving the process to gardening. Alan Dorin, however, is more dismissive, asking whether we would still respect Picasso if he had produced his works by walking into a Library of Babel filled with images instead of books, wandering the stacks, and emerging with whatever random painting struck his fancy.

One solution is to replace the human altogether. The human’s job in this system is evaluation: determining the “fitness” of each image. If the computer could perform some form of aesthetic judgement, the entire process could be automated, and we could explore the realm of computational creativity. I have been exploring the opposite direction. What if the user were better informed about the process and able to steer it more directly? What if we could introduce the potential to attain mastery and to make choices backed by intentionality?

To that end, I have been applying the techniques of visual analytics to this process. Our current attempts center on “spatializing” the images: arranging them in space according to some metric or attribute of each image. This should make it easier for the user to evaluate a large collection of images quickly, as well as provide new ways to tell the computer which directions to explore, based not on random permutations but on actual features to be accentuated or diminished.
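As a rough illustration of spatializing, each image can be scored on two attributes and placed on a 2D canvas according to those scores. The two metrics below, mean brightness and a crude “busyness” score based on differences between neighboring pixels, are hypothetical stand-ins chosen only because they are easy to compute; they are not the mid-level attributes the project is pursuing. The function names are likewise illustrative, and images are assumed to be lists of rows of grayscale values in 0..255.

```python
from statistics import mean


def brightness(img):
    """Mean grayscale value, rescaled to [0, 1]."""
    return mean(v for row in img for v in row) / 255


def busyness(img):
    """Mean absolute difference between horizontally adjacent pixels, in [0, 1].

    A crude stand-in for visual complexity (an assumption, not a validated metric).
    """
    diffs = [abs(row[i + 1] - row[i]) for row in img for i in range(len(row) - 1)]
    return mean(diffs) / 255 if diffs else 0.0


def spatialize(images, metric_x, metric_y):
    """Map each image to an (x, y) position in the unit square from two metrics."""
    xs = [metric_x(img) for img in images]
    ys = [metric_y(img) for img in images]

    def normalize(vals):
        # Min-max scaling; if every image scores the same, center them all.
        lo, hi = min(vals), max(vals)
        return [0.5 if hi == lo else (v - lo) / (hi - lo) for v in vals]

    return list(zip(normalize(xs), normalize(ys)))
```

The returned positions can be handed to any scatter-plot or canvas layout. Min-max normalization lets metrics on different scales share the same unit square, although a single extreme outlier will compress the remaining images toward one side, so a rank-based layout may work better in practice.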

With funding from the DLA, I was able to hire two students last summer, Selena Ling and Fiona Sullivan, to help me begin identifying ways to classify images. Selena worked on a machine learning approach to identifying what we are calling “mid-level” image attributes, such as “curviness.” While we have had some early success classifying the degree of curviness of images produced for training, the classifier still struggles to produce results that agree with human assessments for the more complex images generated by the evolutionary art tool. Fiona developed a survey asking participants to describe some of these images, with an eye toward identifying additional mid-level attributes. While the results were quite varied, we found a clear separation between images that participants described in terms of their structure and images to which participants responded more metaphorically, seeing representations of shapes or emotions. This was perhaps not what we were initially after, but it suggests new avenues to explore.

Moving forward, we will take these varied results and try to turn them into a useful set of metrics that can be applied to arbitrary images. The ultimate goal is a system that is neither an independent computer artist nor a tool like Photoshop, but a collaborator, with neither the computer nor the user solely in charge.